The approach presented here was developed by the author for his diploma thesis in 2009 and was subsequently published as a full paper at the WSCG conference in February 2010.

Concept

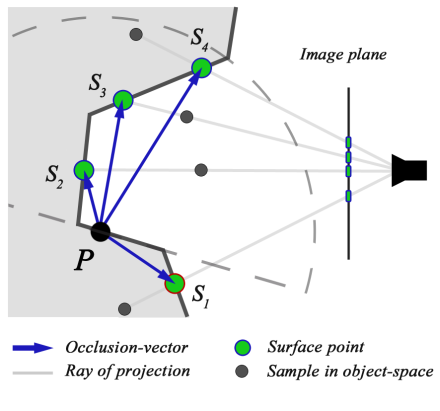

Referring to the definition of AO, occlusion at a point of interest P is caused by any surface point within its visible hemisphere, as this hemisphere represents all directions of incoming light for P. Our approach follows directly from this definition: occluders are determined by checking whether their position actually lies within the hemisphere of P. Given a set of sampling positions on the image plane, we read the respective depth values from the linear z-buffer and unproject the sample points into object space. This gives us a set of surface points representing potential occluders. For each of them we then create a so-called occlusion vector v, pointing from P to the respective occluder candidate. A candidate is an occluder if the angle between the surface normal n at P and v is less than 90°, which is checked with a simple dot product: n · v > 0.
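The occluder test can be sketched in a few lines of Python. This is a minimal illustration, not the author's implementation: the function name `find_occluders` and the representation of vectors as 3-tuples are assumptions, and the candidate points are assumed to be already unprojected into object space.

```python
def sub(a, b):
    """Component-wise difference a - b of two 3D vectors."""
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    """Dot product of two 3D vectors."""
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def find_occluders(p, normal, candidates):
    """Return the occlusion vectors v = S - P of all candidates S that lie
    inside the visible hemisphere of P, i.e. dot(normal, v) > 0, which means
    the angle between the normal and v is below 90 degrees."""
    occluders = []
    for s in candidates:
        v = sub(s, p)              # occlusion vector from P to the candidate
        if dot(normal, v) > 0.0:   # strictly inside the hemisphere
            occluders.append(v)
    return occluders
```

Note that candidates lying exactly in the tangent plane of P (dot product of zero) are not counted, which is what keeps planar surfaces unoccluded.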

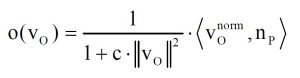

The final occlusion value o(v) is determined from the length of v. The idea is straightforward: long vectors, representing distant objects, occlude less than short vectors pointing to nearby objects. We apply a function similar to the one commonly used in computer graphics to model the attenuation of light:

The strength of the quadratic attenuation is controlled by an artistic parameter c. In addition, a cosine weight, expressed by the attached dot product, is applied. This is based on [Lan02a], who applies Lambert's law to the concept of ambient occlusion and concludes that occlusion is maximal for surface points along the direction of the normal.
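Since the attenuation formula itself is not reproduced here, the following sketch assumes one plausible form that matches the description, o(v) = max(0, n · v/|v|) / (1 + c·|v|²): a quadratic falloff controlled by c, multiplied by a cosine weight. Treat both the exact formula and the helper name `occlusion` as assumptions rather than the published equation.

```python
import math

def occlusion(v, normal, c):
    """Occlusion contribution o(v) of one occlusion vector v.

    ASSUMED form (not necessarily the published formula):
        o(v) = max(0, n . v/|v|) / (1 + c * |v|^2)
    1 / (1 + c*|v|^2) is the quadratic attenuation with artistic parameter c;
    n . v/|v| is the cosine weight, maximal along the unit normal n.
    """
    length = math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)
    if length == 0.0:
        return 0.0  # degenerate: the candidate coincides with P
    cos_weight = (normal[0] * v[0] + normal[1] * v[1] + normal[2] * v[2]) / length
    attenuation = 1.0 / (1.0 + c * length * length)
    return max(0.0, cos_weight) * attenuation
```

With c = 1 and v along the normal, a vector of length 1 contributes 0.5, while a vector of length 2 contributes only 0.2, so nearby occluders dominate as intended.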

Using occlusion vectors is a simple but effective technique. We correctly ignore surface points that do not contribute to occlusion, which are represented by occlusion vectors pointing outside the hemisphere. This enables us to detect fully unoccluded surface points and therefore avoids false shadowing on planar faces and the highlighting of edges, as occurs e.g. in [Kaj09a]. For the same reason, our approach avoids the self-occlusion problem described by [Fil08a]. Furthermore, our approach achieves an enhanced visual quality of the AO, which results from a higher density of samples that are effectively used for occluder detection. This is illustrated in the concept image: we obtain two valid occluders, for the sample points S2 and S3, in contrast to the approaches of [Kaj09a, Fil08a]. Those approaches would even have detected an occluder for sample S1, which is actually not within the hemisphere, as they only check whether the sample lies inside geometry. Finally, the length of the occlusion vector is a more effective measure of occlusion strength than the view-dependent depth deltas used by [Fil08a], because it properly represents the distance from the point of interest to the respective occluder.
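To make the S1 case concrete, the following sketch compares a simplified depth-comparison test (a sample counts as an occluder if it lies behind the visible surface, i.e. inside geometry) with the occlusion-vector test. The scene is hypothetical: only a floor plane y = -1, seen from a camera at the origin looking down -z. The analytic `scene_depth` stands in for the linear z-buffer and `eps` is an assumed small bias against floating-point noise. The depth test is only a stand-in for the idea attributed to [Kaj09a, Fil08a], not a reimplementation of those methods.

```python
def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def scene_depth(dx, dy):
    """Hypothetical analytic 'linear z-buffer' for a scene that contains only
    the floor plane y = -1. (dx, dy, -1) is the projection ray through the
    image plane at z = -1. Returns the positive view depth of the hit,
    or None for background."""
    if dy >= 0.0:
        return None          # the ray never reaches the floor
    return -1.0 / dy         # scaling the ray by this depth gives y == -1

def classify(p, normal, offset, eps=1e-6):
    """Return (depth_test, hemisphere_test) for the sample P + offset.
    Assumes the sample lies in front of the camera (negative z)."""
    s = (p[0] + offset[0], p[1] + offset[1], p[2] + offset[2])
    sample_depth = -s[2]
    dx, dy = s[0] / sample_depth, s[1] / sample_depth   # projection ray
    depth = scene_depth(dx, dy)
    if depth is None:
        return False, False
    # Simplified depth comparison: sample behind the visible surface.
    depth_test = sample_depth > depth
    # Occlusion-vector test: surface point on the ray, then the dot product.
    surface = (dx * depth, dy * depth, -depth)
    v = (surface[0] - p[0], surface[1] - p[1], surface[2] - p[2])
    hemisphere_test = dot(normal, v) > eps   # eps: hypothetical bias
    return depth_test, hemisphere_test

p = (0.0, -1.0, -5.0)   # point of interest on the floor
n = (0.0, 1.0, 0.0)     # floor normal
offsets = [(0.0, -0.5, 0.0), (0.3, -0.2, 0.1), (0.0, 0.5, 0.0), (-0.2, 0.3, -0.4)]
for o in offsets:
    print(o, classify(p, n, o))
```

For P on the floor, the depth test flags the two offsets that point below the plane (the S1 situation), while the occlusion-vector test flags none, because every reconstructed surface point lies in the tangent plane of P.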

Sampling technique

Correctly sampling the vicinity of a surface point forms the basis for a well-approximated occlusion value. Depending on the space in which the samples are created, object-space and image-space sampling can be distinguished. Object-space sampling uses a set of 3D offset vectors to sample the environment of the point of interest [Mit07a][Fil08a], while image-space sampling uses 2D vectors to create sample positions directly on the image plane [Bav08a]. The latter makes the back-projection of sample points unnecessary and offers direct access to the depth and normal buffers.

However, we obtain an enhanced visual quality with object space sampling due to a better distribution of the sample positions.

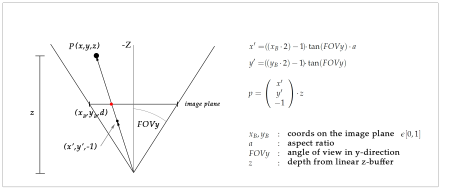

The sampling is performed as follows: first, the object-space position P of the current pixel p has to be determined by unprojecting p, using the respective depth value from the linear z-buffer. The mathematical background is illustrated in the next figure. Besides the screen-space coordinates of p, the aspect ratio a of the image plane and the field of view in the y-direction (FOVy angle) are needed.

The idea of the unprojection here is to create a vector with a depth of -1.0 that points to the position of p on the image plane, and to multiply this vector by the linear z-value to get the object-space position. This computation also covers the inversion of the (OpenGL) viewport transformation. I want to point out that the coordinates on the image plane have to be in the interval [0,1]×[0,1], not [0,resolutionX]×[0,resolutionY]. This is not the only way of doing an unprojection, but it is intuitive and computationally inexpensive.
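The unprojection can be sketched as follows. This assumes an OpenGL-style view space looking down -z, FOVy given in degrees, and a linear depth that is already the positive view-space distance along the viewing axis (if the buffer stores depth divided by the far-plane distance, multiply by the far distance first). The function name `unproject` is hypothetical.

```python
import math

def unproject(u, v, linear_z, fovy_deg, aspect):
    """Reconstruct the object-space (camera-relative) position of pixel p.

    u, v      -- image-plane coordinates of p in [0, 1] x [0, 1]
                 (not [0, resolutionX] x [0, resolutionY])
    linear_z  -- positive view depth of p from the linear z-buffer
                 (assumed to be in world units, not normalized)
    fovy_deg  -- vertical field of view (FOVy) in degrees
    aspect    -- aspect ratio a = width / height of the image plane
    """
    t = math.tan(math.radians(fovy_deg) / 2.0)  # half-height of the plane at z = -1
    # Map [0,1] to [-1,1] (inverse viewport transform) and scale to the
    # image plane at depth -1.0: this is the projection ray through p.
    ray = ((2.0 * u - 1.0) * aspect * t, (2.0 * v - 1.0) * t, -1.0)
    # Scaling the ray by the linear depth yields the object-space position.
    return (ray[0] * linear_z, ray[1] * linear_z, ray[2] * linear_z)
```

For example, the image center (0.5, 0.5) at depth 10 unprojects to (0, 0, -10), directly in front of the camera.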

We then add the 3D offset vectors to the given object-space position P to create sample points in 3D space around P. These points have to be projected to image space in order to read their depth values from the linear z-buffer; this is done by performing the unprojection described above in reverse order. The depth value read this way belongs to the visible surface point that lies on the projection ray through the sample point, so a further unprojection with this depth value yields that point's object-space position. This computation can be optimized by reusing the projection ray from the preceding projection step and simply scaling it according to the depth value read. We thus obtain a point in object space that is guaranteed to lie on geometry. If no geometry but only the background was rendered at that pixel, this is indicated by a depth value of 1.0, and the sample is discarded.
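Projecting a sample point and reconstructing the surface point on its projection ray could look like the sketch below. It assumes a depth buffer stored as a list of rows holding linear depth normalized by the far-plane distance (1.0 meaning background), a nearest-pixel lookup, and the same view-space conventions as the unprojection; the names `project` and `sample_surface_point` are hypothetical.

```python
import math

def project(point, fovy_deg, aspect):
    """Reverse of the unprojection: object-space point -> image-plane
    coordinates (u, v) in [0,1]^2, plus the projection ray (the point
    scaled to depth -1.0). Assumes point[2] < 0 (in front of the camera)."""
    depth = -point[2]                             # positive view depth
    ray = (point[0] / depth, point[1] / depth, -1.0)
    t = math.tan(math.radians(fovy_deg) / 2.0)
    u = (ray[0] / (aspect * t) + 1.0) / 2.0
    v = (ray[1] / t + 1.0) / 2.0
    return u, v, ray

def sample_surface_point(p, offset, depth_buffer, far, fovy_deg, aspect):
    """Return the object-space surface point seen through the sample
    P + offset, or None if the sample is discarded.

    ASSUMPTIONS: depth_buffer[row][col] stores view depth / far,
    with 1.0 for background; row 0 corresponds to v = 0.
    """
    s = (p[0] + offset[0], p[1] + offset[1], p[2] + offset[2])
    if s[2] >= 0.0:
        return None                               # behind the camera
    u, v, ray = project(s, fovy_deg, aspect)
    if not (0.0 <= u < 1.0 and 0.0 <= v < 1.0):
        return None                               # projects off-screen
    height, width = len(depth_buffer), len(depth_buffer[0])
    d = depth_buffer[int(v * height)][int(u * width)]
    if d >= 1.0:
        return None                               # background: discard
    z = d * far                                   # view depth of visible surface
    # Optimization: reuse the projection ray instead of a full unprojection.
    return (ray[0] * z, ray[1] * z, ray[2] * z)
```

The returned point can be fed directly into the occlusion-vector test, since it always lies on rendered geometry.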

The set of 3D offset vectors has to be jittered to avoid aliasing artefacts in the occlusion values of nearby pixels. We use the method described by [Mit07] and reflect the vector set at a random plane for every pixel, which gives each pixel an individual sampling pattern.
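A per-pixel reflection of the offset-vector set at a random plane might be sketched as below. The reflection v' = v - 2(v·n)n preserves vector lengths, so the radial distribution of the kernel is kept while its orientation changes per pixel. Here the random plane normal is drawn from a Python RNG seeded per pixel; this stands in for whatever per-pixel randomness a real shader uses (commonly a small noise texture) and is an assumption, as are the function names.

```python
import math
import random

def reflect(v, n):
    """Reflect vector v at the plane through the origin with unit normal n:
    v' = v - 2 (v . n) n. Vector lengths are preserved."""
    d = v[0] * n[0] + v[1] * n[1] + v[2] * n[2]
    return (v[0] - 2.0 * d * n[0], v[1] - 2.0 * d * n[1], v[2] - 2.0 * d * n[2])

def random_unit_vector(rng):
    """Uniformly distributed unit vector (normalized Gaussian sample)."""
    while True:
        x, y, z = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        length = math.sqrt(x * x + y * y + z * z)
        if length > 1e-9:
            return (x / length, y / length, z / length)

def pixel_offsets(kernel, px, py, seed=0):
    """Per-pixel sampling pattern: reflect the whole offset-vector set at one
    random plane chosen deterministically for pixel (px, py)."""
    rng = random.Random(f"{seed}:{px}:{py}")   # hypothetical per-pixel seed
    n = random_unit_vector(rng)
    return [reflect(k, n) for k in kernel]
```

Because the same plane is used for all vectors of one pixel, the relative arrangement of the kernel is preserved; only neighboring pixels see differently oriented patterns.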

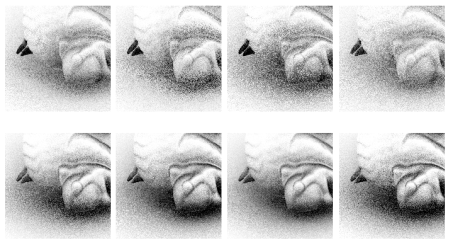

The better visual quality of object-space sampling results from a higher concentration of samples within the visible hemisphere. [Fil08] flips an offset vector during sampling if it points outside the visible hemisphere of the point of interest P; there, flipping is used to avoid self-occlusion artefacts. Although our approach already avoids those artefacts, we also use flipping, but in order to concentrate the sample points within the visible hemisphere and thus detect more occluders. The following figure shows the quality difference of our approach using image-space versus object-space sampling.
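The flipping step amounts to a single dot-product test per offset vector. A minimal sketch, assuming a unit surface normal n at P and using the hypothetical helper name `flip_into_hemisphere`:

```python
def flip_into_hemisphere(offset, normal):
    """If the offset vector points outside the visible hemisphere of P
    (dot(offset, normal) < 0), negate it so the sample lands inside."""
    d = offset[0] * normal[0] + offset[1] * normal[1] + offset[2] * normal[2]
    if d < 0.0:
        return (-offset[0], -offset[1], -offset[2])
    return offset

normal = (0.0, 1.0, 0.0)
kernel = [(0.2, -0.5, 0.1), (0.2, 0.5, 0.1), (-0.3, -0.1, 0.4)]
flipped = [flip_into_hemisphere(k, normal) for k in kernel]
print(flipped)
```

After flipping, every offset lies in the visible hemisphere, so all samples can contribute to occluder detection instead of roughly half of them being wasted below the surface.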

References

[Fil08] D. Filion and R. McNaughton. Effects & techniques. In SIGGRAPH '08: ACM SIGGRAPH 2008 classes, pages 133-164, New York, NY, USA, 2008. ACM. doi: http://doi.acm.org/10.1145/1404435.1404441.

[Mit07] M. Mittring. Finding next gen: Cryengine 2. In SIGGRAPH '07: ACM SIGGRAPH 2007 courses, pages 97-121, New York, NY, USA, 2007. ACM. doi: http://doi.acm.org/10.1145/1281500.1281671.